Twitter wants you to revise that mean tweet

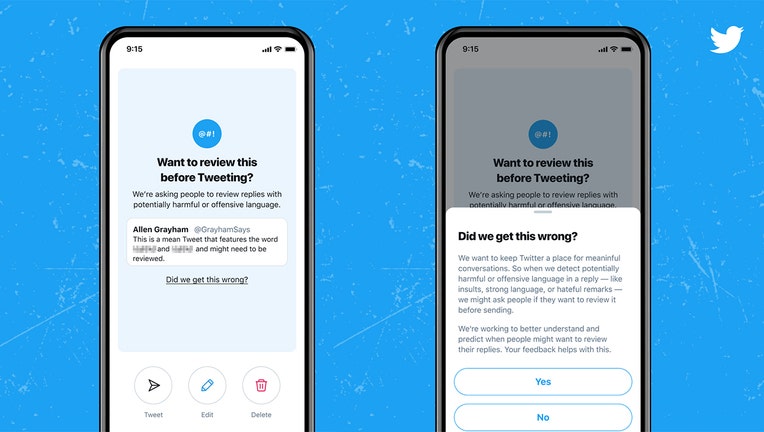

Screenshots of a feature on the Twitter app. (Courtesy of Twitter)

NEW YORK - Twitter is asking users to take a moment, take a deep breath, and consider rewriting mean or offensive tweets. Well, maybe not the deep-breathing part but at least be nice and think twice.

But who decides what could be mean or offensive? Twitter's algorithms. And those programs aren't always right, of course. Sometimes they're overzealous.

"We began testing prompts last year that encouraged people to pause and reconsider a potentially harmful or offensive reply — such as insults, strong language, or hateful remarks — before Tweeting it," Twitter said in a new blog post. "Once prompted, people had an opportunity to take a moment and make edits, delete, or send the reply as is."

But Twitter admitted that at first, the software was probably too sensitive: it couldn't tell if you were being a jerk or just messing around with a friend.

"In early tests, people were sometimes prompted unnecessarily because the algorithms powering the prompts struggled to capture the nuance in many conversations and often didn't differentiate between potentially offensive language, sarcasm, and friendly banter," Twitter said. "Throughout the experiment process, we analyzed results, collected feedback from the public, and worked to address our errors, including detection inconsistencies."

The tech has improved enough to be rolled out more widely, Twitter said. The social media company also said testing showed that the prompts encouraged many users to back down from potentially mean tweets.

"If prompted, 34% of people revised their initial reply or decided to not send their reply at all," Twitter said. "After being prompted once, people composed, on average, 11% fewer offensive replies in the future."

The feature is coming first to iOS and Android users who have enabled English-language settings.